Six Septembers reading group

Six Septembers: Mathematics for the Humanist

Patrick Juola and Stephen Ramsay

Introduction

A Thousand Plateaus

This is a book on advanced mathematics intended for humanists.

We could defend ourselves in the usual way; everyone can benefit from knowing something about mathematics in the same way that everyone can benefit from knowing something about Russian literature, or biology, or agronomy. In the current university climate, with its deep attachment to “interdisciplinarity” (however vaguely defined), such arguments might even seem settled.

But we have in mind a much more practical situation that now arises with great regularity. Increasingly, humanist scholars of all stripes are turning their attention toward materials that represent enormous opportunities for the future of humanistic inquiry. We are speaking, of course, of the vast array of digital materials that now present themselves as objects of study: Google Books (rumored to be over twenty million volumes at the time of this writing); vast stores of GIS data; digitized census records; image archives of startling breadth; and above all, the World Wide Web itself, which may well be the most important “human record” of the last five hundred years.

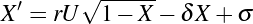

We have great confidence that the humanities will not only benefit from the digital turn, but that it will develop its own distinct methodologies for dealing with this sudden embarrassment of riches. At the same time, the nature of that material has created a world in which English professors are engaged in data mining, historians are becoming interested in ever more advanced forms of regression analysis, librarians are learning to program, art historians are exploring image segmentation, and philosophers are delving into the world of computational linguistics.

Or rather, some are. The field now known as “digital humanities”—a strikingly interdisciplinary (and international) group of scholars engaged in such pursuits—remains a comparatively small band of devoted investigators, when compared to the larger and older disciplines from which they come. The reasons for this should be obvious; all of the techniques mentioned above require that one stray into intellectual territory that was until recently the exclusive province of the sciences (whether computer, social, or “hard”).

The humanist scholar who undertakes to study data mining, advanced statistics, or text processing algorithms often approaches these subjects with great optimism. After many years spent studying subjects of comparable complexity in literature, history, or ancient languages, he or she certainly has reason to believe that whatever knowledge is demanded will become tractable with the right amount of determination and hard work. Data mining is complicated, but so is French literary theory. Everyone has to start somewhere.

But that attempt often ends badly. A humanist scholar who tries to read an article in, say, a computer science journal—or even, for that matter, an introductory text on a highly technical subject—will quite often be brought up short the minute things get mathematical (as they almost inevitably will). There is much that the assiduous student can glean with the proper amount of effort in such situations, but when the equations appear, old phobias appear with them. It might as well be Greek. It would be easier if it were Greek.

We have very particular ideas about what is lacking here. In our experience, the problem stems from two deficiencies:

- Humanists without training in the formal notation of

mathematics—notation which in nearly every case values concision over explanatory transparency—cannot make their way through an argument that depends on that notation. And there is, in general, no way to “figure it out” without that training. - Humanists often lack the proper set of concepts for dealing with mathematical material—concepts that are independent of their specific manifestation in equations and proofs, but without which mathematical arguments become mostly unintelligible.

This book does not explain all of the mathematics that anyone is likely to encounter in the humanities. That would be impossible, to begin with; part of what makes the digital humanities so exciting is the constant eruption of lateral thinking that allows a scholar to see some tool or technique, apparently unrelated to the humanities, as a new vector for study and contemplation. Our purpose, instead, is to impart the concepts that we believe underlie most of the mathematics that you are likely to encounter, and to unfold the notation in a way that removes that particular barrier completely. This book is, in other words, a primer—a book that you can use to develop the skills and habits of mind that will allow you take on more complicated technical material with confidence.

Some of what we talk about in this book is directly applicable to problems in the humanities, but that is not our main concern. Much of it is devoted to material that might never appear in the ordinary course of “doing” digital humanities, sociology, game studies, or computational linguistics. Yet we firmly believe that all of what we discuss here is in the back of the mind of anyone who does serious technical work in these areas. This book, to put it plainly, is concerned with the things that the author of a technical article knows, but isn’t saying. Like any field, mathematics operates under a regime of shared assumptions, and it is our purpose to elucidate some of those assumptions for the newcomer.

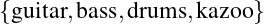

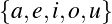

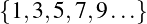

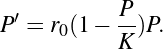

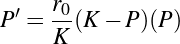

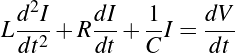

The individual subjects we tackle are (in order): logic and proof, discrete mathematics, abstract algebra, probability and statistics, calculus, and differential equations. This is not at all the order in which these subjects are usually taught in school curricula, and indeed, it is possible to take a course of study that does not include all of them. Our ordering is borne of our own sense of how best to convey the concepts of mathematics to humanists, and is, like mathematics itself, strongly cumulative. We have made no attempt to write chapters that can stand on their own, and would therefore strongly suggest that they be read in order.

Of course, any of these areas could be the subject of a multi-volume series of books. In fact, all of them are, and we recommend additional reading where appropriate. Our aim here is to introduce the major concepts and terminology of the areas, not to provide a detailed exploration of the technical foundations or even necessarily to catalog the major results in the areas. Instead, we present concepts and vocabulary appropriate to the first few weeks of—a September, so to speak—of a rigorous, university-level course, but (we hope) with a presentation more focused on understanding and application and less on proof techniques.

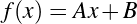

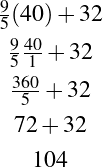

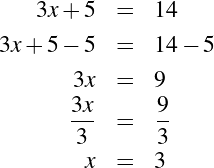

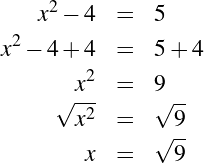

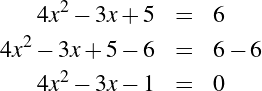

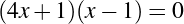

We assume knowledge of nothing more than basic algebra (along with, perhaps, elementary geometry and a general sense of the main ideas in trigonometry). We are aware, of course, that for most of our readers, decades have passed since these subjects were first unfolded. We provide a bracingly brief refresher course in algebra in the appendix, though you may find it helpful to turn to it only when needed.

This book is written by two humanists. One is a professor of English who found himself drawn to the digital humanities, and then struggled mightily (for years) through the very situation we describe above before gaining facility with the subject. The other is a professor of mathematics and computer science who has spent his entire career in the company of humanists, struggling to understand their questions and their methodologies. This collaboration has been sustained at every turn by certain attitudes which hold in common, and which we have tried to bring to these pages.

First, we have nothing but contempt for the phrase “math for poets”—a sobriquet we consider only slightly less demeaning and derisive than “logic for girls.” The implication that this idea ensconces (often, sadly, in course titles) is one that we reject, because it implies that the subject can only be understood if certain, largely negative things are assumed about the student’s abilities. We assume that our readers are motivated adults who need explanations, not some radically reframed version of the subject that makes rude assumptions about “the way they think.” We have tried very hard to explain things as clearly as we can, but we do not shy away from “real” mathematics. Since it is an introductory text, we are aware not only of roads not taken, but of simplifications that scarcely convey the depths of the subjects we’re discussing. But again, we have taken this route in order to lay proper emphasis on concepts, not to present a watered-down version of a complicated subject. The “for” in our title is as it appears in the phrase, “We have a gift for you.”

We also believe firmly in autodidacticism. Aside from having a very noble tradition in mathematics (some of the greatest mathematicians have been, properly speaking, self-educated amateurs), this mode of learning becomes a necessity for those of us long out school and without opportunity to undertake years of organized study. At the same time, we believe in community. We aren’t so bold as to think that we’ve hit upon the most lucid explanations possible in every case, and we encourage you to seek out mentors who can help. We even provide a forum for such discussions on the website that accompanies this book.

Finally, we believe in the humanities. Both of us have an enduring fascination with mathematics, but our passions as scholars remain focused on the ways in which this subject holds out the possibility of fruitful interaction with the study of the human record. The hope we have for this book is not that it will create new mathematicians, but that it will embolden people to see new possibilities for the subjects to which they’ve devoted themselves.

To Deliver You from the Preliminary Terrors

Having just extolled your virtues as a serious, motivated, autodidact, we nonetheless need to admit that reading mathematics can be a difficult matter—even in the context of an introductory text like this.

We borrow the title of this section from a similar note to the reader in Silvanus P. Thompson’s 1910 book Calculus Made Easy—a widely acknowledged masterpiece of clear and elegant mathematical exposition that has, in many ways, inspired our own work. We especially like the subtitle: Being a Very-Simplest Introduction to Those Beautiful Methods of Reckoning Which Are Generally Called by the Terrifying Names of the Differential Calculus and the Integral Calculus. We are not so far from being beginners in this subject as to have no sense of the terror to which Thompson refers; it visits everyone, sooner or later.

You will almost certainly say to yourself at some point: “Wait. I am lost.” We believe firmly that there are several ways out of this pathless wood—the chief one being the avoidance of those conditions that get us there in the first place. In particular:

- We who are used to reading books and scholarly articles in the humanities seldom get “stuck” when reading something. If we miss an idea, we can usually recover as the narrative proceeds. Mathematical reading is not like this. The subject is by nature cumulative, and some of the apparently trivial things we say at the beginnings of chapters have great moment later on. It is therefore necessary to check your understanding constantly: “Did I get that? Do I really understand that?” We really can’t emphasize this enough.

- As a direct corollary: Mathematical reading is very slow. It is not at all uncommon for a professional mathematician to spend weeks “reading” an eight-page article. We doubt that anything in our pages will require such patience, but we want to caution against too brisk a pace and assure you that even the most gifted mathematician is moving at a fraction of the speed with which an ordinary reader moves through, say, a novel.

- “Mathematics,” to quote one of our beloved instructors, “is not a spectator sport.” We have no desire to make our subject feel like homework, but there’s no getting around the fact that to understand mathematical ideas, you have to do mathematics. We therefore humbly suggest that you seek out problems to solve. Nothing will elucidate the subject faster than trying to work out how a given concept applies to a given problem. We provide some sample exercises on the website that accompanies this book, but the best problems are those that occur to you as you’re working in some other area (say, looking at the mechanics of a game or trying to understand a social network). Our emphasis, once again, is on concepts, but many of these same concepts emerged in the context of real-world problems to be solved. There’s no substitute for re-creating the conditions under which those insights first became manifest.

We are teachers, and we suspect that many of our readers are as well. Even if you are not a teacher, you can easily understand something that every experienced teacher knows very well: that when it comes to teaching complicated subjects, half the battle is getting students to recognize themselves as the sort of people who can do it well. We have to get to “I got it” early and often in order to build the confidence that is required to proceed.

The temptation, when one becomes lost, is to assume that it is your fault—that you’re just not built for this. We think that many things may have gone wrong in such cases (the authors’ lack of clarity among them). But we think (and there is research to prove it) that human beings are built for mathematical thinking in the same way that human beings are built for speaking and using tools. When the moment comes (and it will), our advice is to heed the points above and to recognize that people with significantly fewer intellectual gifts than yourself have successfully mastered this material. Thompson offers an ancient proverb as the epigraph to his text that is beloved by scientists, engineers, and mathematicians the world over, and which we think is a good thing to repeat during the dark night: What one fool can do, another can.

1 Logic and Proof

The great twentieth-century British mathematician, G. H. Hardy

(1877–1947), once wrote, “I have never done anything ‘useful.’ No discovery of mine has

made, or is likely to make, directly or indirectly, for good or ill, the least difference to the

amenity of the world” [5]. Yet he did allow that one of his discoveries had indeed been

important—namely, his “discovery” of the great Indian mathematician Srinivasa

Ramanujan (1887–1920). Ramanujan, with almost no training in advanced mathematics,

had managed to derive some of the most important mathematical results of the last five

centuries while working as a clerk at the Account-General’s office in Madras. He had sent

some of his work to several members of the mathematics faculty at Cambridge, but only

Hardy had recognized Ramanujan as a genius. They would become lifelong friends and

collaborators.

For Hardy, the beauty of mathematics was to be found in the layered elegance by which theorems are established from more elementary statements. Ramanujan, by contrast, seems to have found the tedium of proof too burdensome. “It was goddess Namagiri, he would tell his friends, to whom he owed his mathematical gifts. Namagiri would write the equations on his tongue. Namagiri would bestow mathematical insights in his dreams” [8]:

Hardy used to visit him, as he lay dying in hospital at Putney. It was on one of those visits that there happened the incident of the taxi-cab number. Hardy had gone out to Putney by taxi, as usual his chosen method of conveyance. He went into the room where Ramanujan was lying. Hardy, always inept about introducing a conversation, said, probably without a greeting, and certainly as his first remark: “I thought the number of my taxi-cab was 1729. It seemed to me rather a dull number.” To which Ramanujan replied: “No, Hardy! No, Hardy! It is a very interesting number. It is the smallest number expressible as the sum of two cubes in two different ways.” [5]

For Hardy, the story was undoubtedly meant to stand as a tribute to his friend. For surely, only someone with a very special relationship with numbers could possibly make such an observation spontaneously. But for all that, one can almost imagine Hardy’s thoughts on the ride home. Is it true? And is it, in fact, interesting?

We can settle the first question with a proof—a demonstration that the statement must be true (or that it must be false). To do this, we would have to show that 1729 is in fact the sum of two cubes, that there are exactly two such cubes that sum to 1729, and that no smaller number can be created this way. The second question is undoubtedly a more subjective one, though we might discover that the proof itself reveals something else about mathematics, or that the result can be used in other, perhaps more “interesting” demonstrations.

We have, then, a conjecture: 1729 is the smallest number expressible as the sum of two cubes in two different ways. Having proved it, it would become a theorem. If that theorem could in turn be used to establish further theorems, it would become a lemma. The study of those methods and processes by which arguments are made, theorems established, and lemmas formed is called mathematical logic.

The idea of proving ever-more-elaborate statements using the results of earlier proofs is familiar to most people from secondary-school geometry. Euclidean geometry, in fact, provides an excellent introduction not only to logic, but to the place of logic within mathematics. Students who enjoy the subject will remark on its beauty—on the way that things “fit together.” Yet others are quick to point out that there is something fundamentally wrong with a system that has to define basic things like the notion of a point as (to quote Euclid) “that which has no part” and a line as a “breadthless length.” [4] Even the most enthusiastic student will at some point ask why it is necessary to prove so many things that are obviously true at a glance.

Such questions hint at problems and conundrums that are among the oldest questions in philosophy. At stake is not merely the virtues and limitations of this or that system, but (one suspects) the virtues and limitations of our ability to reason about systems in general. Behind the many methodologies (or “logics”) that have been proposed is a set of basic questions about methodology itself, and about the nature of those epistemological tools that we have traditionally employed to establish truth and reject falsehood in matters ranging from the existence of triangles to the proper form of government.

Since it is the purpose of this book to present mathematics to humanists, we will focus here on the tools themselves. Our intention, however, is not to put these larger questions into abeyance, but rather to draw them forth more forcefully by showing how these systems actually work and what it is like to work with them. Toward the end, we revisit some of the major philosophical insights concerning logic and proof for which twentieth-century mathematics may be most well remembered.

1.1 Formal Logic

Logic usually traffics in the truth and falsehood of statements. To say this is already to restrict our view, since not all statements are susceptible to such easy classification. Some statements appear to have nothing at all to do with truth and falsehood (“Look out for that tree!”); others might have something to do with truth and falsehood, but are not themselves true or false (“Was that tree there last week?”).

In technical terms, a statement that can be true or false is said to be a proposition (or to have propositional content). This puts us immediately in more broadly philosophical territory, since some would like to declare a law of the excluded middle and say that mathematics is exclusively concerned with statements that are either true or false. This might seem an undue restriction; surely a system constrained in this way is already barred from the investigation of more nuanced problems. Yet propositions hold out a possibility that logicians have been unable to resist—namely, the idea that a statement might be “analytically” true or true by virtue of its structure. For this reason, one might view logic as the study of the structure of arguments. The goal is not so much to prove this or that, but rather to say that statements of a certain form—independent even of their content—must always serve to support a given argument.

1.1.1 Deduction and Induction

Though there are many logical systems, mathematical logicians traditionally distinguish between the two broad classes familiar to us from philosophy and science: deductive and inductive. Deductive logic, you’ll recall, is the process of making inferences from general premises to specific conclusions. If, for example, we know that (i.e. it is true that) all fish live in the water, then any specific fish—say, this particular goldfish—must itself live in the water. By contrast, inductive logic is the process of reasoning from specific instances to general conclusions. Every fish I’ve seen lives in the water, so therefore I conclude that all fish live in water.

This common distinction hints at the idea of analytical truth. Arguments based on deductive reasoning are guaranteed to be correct (provided we follow certain rules), while those based on inductive reasoning are not. So even if all the swans I have ever seen are white, the statement “All swans are white” can be demonstrated as false the minute an informed reader demonstrates the existence of black ones. Some logicians have suggested that this guarantee is a better criterion for defining the difference between deductive and inductive logic. It is, after all, possible to reason to some kinds of general statements from specific examples. Seeing a goldfish living in a fishbowl, I can say with certainty that the statement “all fish live in the ocean” cannot be true, but under the revised criterion, this would be considered a deductive argument.

1.1.2 Validity, Fallacy, and Paradox

Validity

But how can we tell if we are reasoning “correctly?” Or, to put it another way: How can we tell that certain arguments support their conclusions and that others do not? From a mathematical perspective, the first step toward answering questions like these necessitates careful and precise definition of the terms. Defining things doesn’t itself guarantee correctness (we could, after all, define things incorrectly), but definitions increase the likelihood that the operative assumptions are shared ones. The old Latin legal term arguendo (“for the sake of argument”) captures the sense in which definitions are put forth in mathematics.

For the sake of arguing about mathematics, then, we define a few words more restrictively than we do normally, and say that an argument is a series of propositions—which is to say, a series of statements that are either true or false. Propositions that the speaker believes to be true (or accepts provisionally as true) and which are offered for your acceptance or belief are called premises. The last proposition is conventionally called the conclusion. The tantalizing possibility, as we mentioned before, is that this structure has some kind of inevitability to it. Because the premises are true, the conclusion must be true.

We can structure and formalize our earlier argument as follows:

| (First premise) | A goldfish is a fish. | (1.1) |

| (Second premise) | All fish live in water. | (1.2) |

| (Conclusion) | ∴ Goldfish live in water. | (1.3) |

It should be intuitively obvious2 that the premises of this argument offer support for the conclusion, while the premises of the following argument do not:

| (First premise) | My friend’s cat is a Siamese cat. | (1.4) |

| (Second premise) | Some Siamese cats have blue eyes. | (1.5) |

| (Conclusion) | ∴ My friend’s cat has blue eyes. | (1.6) |

Such examples naturally seem almost insulting in their simplicity, but they go to the heart of the matter. Saying that something is “intuitively obvious” doesn’t guarantee that it is true. Such statements criticize our intuition (which might be another term for our experiential beliefs) in advance, in the hope that this appeal will bias us in favor of agreement. A great deal of substantive arguing in the real world depends on such appeals, and it is not at all cogent to say that such rhetorical moves are illegitimate. In the context of mathematical logic, however, it seems an unlikely path toward “structural truth.”

Unfortunately, at this point in our exposition, we don’t have anything better. Informally, of course, the problem appears to lie with the word “some.” If “some” Siamese have blue eyes, some of them might not have blue eyes, and we don’t know which type my friend has.

A valid argument is therefore traditionally defined as an argument where the truth of the premises entails the conclusion (that is, it is not possible for the premises to be true while the conclusion is false). Being rigorists by nature, logicians go further and say that these conditions must obtain not only in the world, but in “all possible worlds.” This latter stipulation attempts to move the question entirely beyond intuition and experience, even to the point of imagining radically altered circumstances in which the argument might not be valid. The argument about Siamese cats is invalid, because (irrespective of the actual cat) my friend’s cat might have green eyes. But twist and turn as we might, we can’t invalidate the first argument.

Declaring an argument invalid doesn’t necessarily mean that the conclusions are wrong. The following argument is identical in structure to the second (lines 1.4–1.6), and yet the conclusion is still true:

| Paul Newman is a person. | (true) | (1.7) | ||

| Some people have blue eyes. | (true) | (1.8) | ||

| ∴ Paul Newman has blue eyes. | (true) | (1.9) |

It is invalid, not because it reaches a false statement, but because identically structured arguments can lead to false conclusions.

Similarly, an argument can be valid without its conclusions being true:

| Paul Newman is a person. | (true) | (1.10) | ||

| All people have seven eyes. | (false) | (1.11) | ||

| ∴ Paul Newman has seven eyes. | (false) | (1.12) |

In this case, we can see that at least one of the premises is false. Yet we can (with some effort) imagine a possible world where all people do have seven eyes and where Paul Newman is considered a person. On such a planet, Paul Newman would also have seven eyes.

A valid argument can be thought of as as a truth-making machine; if you put true propositions into it, the propositions you get out of it are guaranteed to be true. You can use this machine either to generate new truths, or to check (prove) that statements you already believe are in fact true.

Fallacy

Mathematical logic is noticeably strict when it comes to valid arguments; the rest of the world is far less so. Many highly persuasive arguments made in the context of business or politics (or even just ordinary conversation) fail the “possible world” criterion. Most commonly accepted scientific “truths” would likewise fail due to reliance on inductive (and therefore invalid) argument. Statistics, too, is mostly about assessing the degree of support offered by evidentiary structures that are formally invalid (again, because they are mostly inductive). Yet invalid arguments are often used illegitimately to sell things, propagandize, or simply to lie.

The tradition of compiling lists of fallacies—relatively convincing, but invalid arguments—is an ancient one. In some cases, the invalidity is rather obvious, as it is with the so called argumentum ad baculum (literally, “appeal to the stick”): “If you don’t believe this proposition, I will hit you with this stick.” But other fallacies are far more subtle, and provide useful illustration of the idea of argumentative structure.

The two valid arguments presented above both have the same general form. We can illustrate this by replacing phrases like “fish” or “people” with letters as placeholders, yielding the following structure:3

| All S are M. | (1.13) | |

| All M are P. | (1.14) | |

| ∴ All S are P. | (1.15) |

Suppose that one were to swap the terms of the second premise, like so:

| All S are M. | (1.16) | |

| All P are M. | (1.17) | |

| ∴ All S are P. | (1.18) |

The argument now has a pleasing symmetry, and yet it is jarringly invalid, as the following example makes clear:

| All money [in the United States] is green. | (1.19) | |

| All leaves are green. | (1.20) | |

| ∴ All money is leaves (and thus grows on trees). | (1.21) |

This fallacy is called the fallacy of the undistributed middle, and the symbolic version above illustrates why. An argument with an “undistributed middle” has the middle term—the one that does not appear in the conclusion—appearing only on the right side (or left side) of the sentence (the predicate). Once again, we can see that it is invalid without even knowing what S, M, and P are.

This kind of structure is often used in arguments to support the idea of guilt by association. For example, if all Communists support socialized medicine, and a candidate’s political opponent also supports socialized medicine, the candidate could use this in their campaign literature to “support” the argument that his or her opponent is a closet Communist. Yet even here, there are instances where the fully fallacious argument can be reasonably persuasive. For example, a prosecuting attorney might find it helpful to point out that the murderer had a certain type of DNA that matches the defendant, as evidence of the defendant’s guilt.

A full catalog of fallacies with accompanying illustration would be a full book in its own right and would take this chapter too far afield. However, we’d like to mention one fallacy that is considered extremely dangerous in mathematical logic: namely, the so-called fallacy of equivocation, which occurs when the meaning of a term shifts in the middle of an argument.

| A feather is light. | (1.22) | |

| What is light cannot be dark. | (1.23) | |

| ∴ A feather cannot be dark. | (1.24) |

Much of mathematical discourse is centered around exact definitions and their implications to prevent accidental ambiguity for this very reason.

Paradox

What of sentences that are neither true nor false? As we’ve already mentioned, some kinds of speech acts don’t have propositional content—questions, exclamations, orders, and so forth. Yet a broad category of sentences appear superficially to be propositions, but upon closer inspection cannot be. Such sentences are called paradoxes.

A classic example of paradox is the Barber Paradox. “In a small town with only one barber, the barber shaves all and only the men who are not self-shavers (i.e. do not shave themselves). Is the barber a self-shaver?” If the barber is not a self-shaver, then he must shave himself (because he shaves all the men who aren’t self-shavers), and therefore he must be a self-shaver. Conversely, if he is a self-shaver, then (since he shaves only men who aren’t self-shavers), he must not shave himself, and therefore is not a self-shaver.4

The paradox, then, is that if the sentence “The barber is a self-shaver” is true, then the same sentence must be false. If the sentence is false, then it has to be true. But it can’t be both true and false (the law of the excluded middle again), and so it therefore can’t be either true or false without contradicting itself. It is a paradox.

In practical terms, a paradox means that there is something wrong with your assumptions and definitions. In the twenty-first century, this paradox loses something of its punch when we note that female barbers exist. If you assume (implicitly) that the barber is a man, the paradox is genuine. However, if the barber is a woman, then the situation can be resolved by noting that the problem specifically states that she shaves “all and only the men”—i.e. the problem is explicit about the fact that her client list is exclusively male. Naturally, then, she doesn’t shave herself, but there is no paradox. She simply doesn’t shave herself—if she is shaved at all, someone else must do it for her.

Paradoxes have been hugely influential in mathematics precisely because they are so good at rooting out hidden assumptions and problems with definitions. Obviously, any logical scheme that permits the inference of paradoxes is to be avoided. As we’ll see later, one of the most earth-shattering insights in modern mathematics relies on the elucidation of a paradox.

1.2 Types of Deductive Logic

So in order to be able to analyze deductive arguments, it becomes necessary to represent them accurately and concisely. This section presents several different systems of representation and analysis, ranging from ancient Greek logic to the present day. We also present some of the notation of modern logic in common use in the literature of both mathematics and analytical philosophy.

1.2.1 Syllogistic Logic

Syllogistic logic, the subject of a collection of treatises by Aristotle, is among the most enduring and influential forms of logic. During the period that stretches from the high middle ages to the nineteenth century, nearly every area of intellectual endeavor in the West—from mathematics to theology—became heavily influenced by the basic terms and “cognitive style” of syllogistic logic.5 Even as late as 1781, Immanuel Kant—hardly an undiscerning thinker—could innocently declare that “since Aristotle, [logic has] been unable to take a single step forward, and therefore seems to all appearance to be finished and complete” [9].

A syllogism (from a Greek word meaning “inference” or “conclusion”) is the sort of argument we’ve been examining up until now: an argument based on two premises and a conclusion. The ancients discerned four basic forms that the statements of a syllogism could take:

- All A is B. (universal affirmative)

- No A is B. (universal negative)

- Some A is B. (particular affirmative)

- Some A is not-B. (particular negative)

The following sentences are all examples of A-type propositions:

- All cats are mammals.

- All basketball players are tall (i.e., are tall-things).

- All insomniacs snore

(i.e., All things-that-are-insomniacs are things-that-snore). - Bill Gates is rich

(i.e., All things-that-are-Bill-Gates are things-that-are-rich).

The following sentences would be I-type propositions:

- Some cats are black (we don’t specify which).

- One of my nieces is a champion speller.

- There are black swans in Australia (i.e., Some swans are black-things).

This is an O-type proposition:

- Not all lawyers are crooks

…and this is an E-type proposition:

- None but the brave deserve the fair

(i.e. No things-that-are-not-brave are things-that-deserve-the-fair)

With only four different sentence types, and three sentences in each syllogism, there are fewer than 200 different possible syllogisms, only some of which—fewer than 20—are valid. It is therefore possible to compile a table or list of all the valid syllogisms. Such lists were compiled during the Middle Ages, and various syllogisms given individual names. For example, the Barbara Syllogism we discussed above is made up of three A-type sentences:6

| Major premise-A | My sister’s goldfish is a fish. | (1.25) |

| Minor premise-A | All fish live in water. | (1.26) |

| Conclusion-A | ∴ my sister’s goldfish lives in water. | (1.27) |

Similarly, the Darii syllogism has an A-type major premise, an I-type minor premise, and an I-type conclusion:

| Major premise-A | All trout are fish. | (1.28) |

| Minor premise-I | No fish live in trees. | (1.29) |

| Conclusion-I | ∴ No trout live in trees. | (1.30) |

Enumerating all of the valid syllogisms was considered a good way of learning which ones were and were not “valid” arguments within this framework. A more powerful property emerged, however, when the valid syllogisms were rewritten as prescriptive rules about how to reason. For example, we can restructure the Barbara syllogism as:

If you have a pair of premises “All A are B” and “All B are C,” you may infer “All A are C” (without regard to the exact terms of A, B, and C).

This is an example of an inference rule. Such a rule can be used in one of two ways. First, it can be used, once again, as a truth-making machine. Given a pair of statements in the appropriate form, the “machine” can infer the appropriate conclusion. It can also be used, in reverse, as a system for checking the truth of a statement or the validity of an argument. If a statement is offered as a conclusion, we could check to see whether the pair of premises corresponds to a valid syllogistic structure. This checking can be done without detailed knowledge of the propositions in question; in fact, if the statements are offered in a sufficiently stereotypical form, it could be done by a computer with nothing more than text matching.

While the influence of syllogistic logic is everywhere evident in the systems which came to replace it, it has now more-or-less disappeared as a subject of intensive inquiry in philosophy and mathematics. That is due, at least in part, to evolving notions of what logic itself is about. Earlier, we described logic as a system for discerning the truth or falsehood of statements. While that is true, we can also think of logic as an attempt to provide rigorously defined meanings and interpretations for particular words. Syllogistic logic provides quite precise descriptions for words like “all,” “some,” and “none.” It falters, however, even with the introduction of a word like “or”:

| Either Timothy or Sasha is a cat. | (1.31) | |

| Timothy is not a cat. | (1.32) | |

| Sasha is a cat. | (1.33) |

This resembles a syllogism, but there is no way within the framework to get from a statement about some of the members of a group (the set of things that are either Timothy or Sasha) to a statement about the individuals in that group.

In general, the problem with syllogistic logic is not so much a matter of logical validity, but of expressive power. The revolution in logic that began in the nineteenth-century with the work of people like George Boole (1815–1864) and Augustus de Morgan (1806–1871) was occasioned not only by the quest for better “truth machines,” but by attempts to understand language and its relation to thought and meaning. As we shall see, it also led to revolutionary ideas about the foundations of mathematics itself.

1.2.2 Propositional Logic

The first development toward a more expressive logic moved the focus of concern from the terms of a proposition to the entire proposition taken as a whole. Consider the following:

- “Percy is a cat”

- “Quail are a kind of lizard”

- “Rabelais was the author of both Gargantua and Pantagruel”

- “Sasha is a cat”

- “ Timothy is a chinchilla”

These are, according to our earlier definition, propositions, in the sense that they can be either true of false. We can begin the construction of a propositional logic by attempting to discern the structures that govern the relationships among such propositions.

We can begin with the observation that to every proposition, there is a contrary proposition, one that is false if the original is true, and true if the original is false. Linguistically, we can construct such propositions by prepending the phrase “It is not the case that …” to the beginning of any proposition. Thus:

- “It is not the case that Percy is a cat”

- “It is not the case that quail are a kind of lizard”

- “It is not the case that Rabelais was the author of both Gargantua and Pantagruel”

- “It is not the case that Sasha is a cat”

- “It is not the case that Timothy is a chinchilla”

These are simple propositions, but one of them is not as simple as it might be. The Rabelais sentence really indicates both that

- Rabelais is the author of Gargantua.

and that

- Rabelais is the author of Pantagruel.

Joining these elementary propositions together with “and” creates a single (linguistic) statement that asserts an underlying combination of the two (logical) propositions. Clearly, we could join the elementary propositions differently by saying, for example, “Rabelais is the author of Gargantua but not the author of The Decameron.” In either case, however, it becomes possible to speak of the connecting term as having a logic unto itself.

Let’s say I assert the following:

- Sasha is a cat and Timothy is a chinchilla.

Under what circumstances would we regard this statement as false? Clearly, it would be false if Sasha was a dog and Timothy a parakeet, but we would also consider it false if only one of those were true. That is, if Sasha is indeed a cat but Timothy is a parakeet, we would consider the entire proposition to be false.

Other connectives can have different logics. In the case of a disjunction (or), both “disjuncts” would have to be false for the compound proposition to be false.

- Sasha is a cat or Timothy is a chinchilla

Propositions embedded within “if…then” express the concept of implication: “If Rabelais was the author of Gargantua, then Rabelais was (also) the author of Pantagruel.” Despite the apparent simplicity of such phrases, the precise logic of such connectives can be more difficult to grasp. Our intuitive understanding is that the statement “if the Yankees win two more games, they will make the playoffs” has two main implications. First, if the statement is true and the Yankees win two more games, they will indeed make the playoffs. Second, if the Yankees win two more games, but don’t make the playoffs, then the statement was false. But what about the case where the Yankees don’t win? If the Yankees don’t win two more games, but slip into the playoffs anyway, was the original statement false? How about if the Yankees don’t win any more games, and don’t make the playoffs? Was the speaker lying? Intuition suggests, and propositional logic agrees, that an “if-then” statement is true, even when the first part is false.

We can break this down into four cases to see this more clearly.

- The Yankees do win, and they do make the playoffs. The speaker is correct, and the statement is true.

- The Yankees do win, but they don’t make the playoffs. The speaker is wrong, and the statement is false.

- The Yankees don’t win, but they make the playoffs anyway. The speaker is still correct, and the statement is true.

- The Yankees don’t win, and they don’t make the playoffs. By convention the statement is still true, because we can’t demonstrate its falsity.

Thus, the only way for an “if-then” statement to be false is for the first part to be true and the second part false.

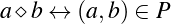

The last connective is a double implication, sometimes written “if and only if.” This is useful, almost stereotypical, for definitions. “A person is a bachelor if and only if he is an unmarried male.” “A number is a perfect square if and only if there is another number that, squared, gives you the first number.” “A faculty member can vote on this motion if and only if she is a tenured member of the faculty.” Some propositions with this connective have a double implication because they combine two separate implications. For example, “The Yankees will make the playoffs if and only if they win two more games” suggests two things. In this case, one is saying not only that “if the Yankees win two, they will make the playoffs,” but also that the only way the Yankees will make the playoffs is by winning two more games: “if the Yankees make the playoffs, they will (have won) two games.”

Using these basic semantic primitives, it becomes possible to construct more complicated propositions. “All of the seven dwarfs have a beard except for Dopey,” for example, can be built with six conjunctions and one negation:

- Grumpy had a beard

and - Sneezy had a beard

and - Sleepy had a beard

and - Happy had a beard

and - Doc had a beard

and - Bashful had a beard

and - Dopey did not have a beard

It is also possible to devise other connectives in terms of the ideas expressed above. Some logicians, for example, like to distinguish between inclusive or and exclusive or. These two concepts can be understood as the difference implicit in the following questions:

- Would you like milk or sugar in your coffee?

- Would you like beef or chicken at the banquet?

It is acceptable and even expected that one might answer the first question with “Both, please,” but not the second. In the first case, the answer “yes” includes the possibility of both. Similarly, a statement with an inclusive or is true “if and only if” the first disjunct is true, the second disjunct is true, or both are. This is the standard semantics of disjunction discussed above (no one would expect to be served two main courses). Similarly, a proposition with an exclusive or is true if and only if one or the other disjunct is true, but not both. The statement “Timothy is a chinchilla or Sasha is a cat” might therefore be either true or false, depending upon whether “or” is interpreted as inclusive or exclusive—again, we see the focus in mathematical expression on clear definitions to avoid confusion and ambiguity.

The aim, though, is not merely clarity but generality. As with syllogistic logic, we’d like to understand the general structure that governs the truth and falsehood of propositions. To achieve that, we introduce two syntactic changes. The first merely replaces the elementary propositions with propositional variables (by convention, capital letters). Thus:

- P

- = “Percy is a cat”

- Q

- = “Quail are a kind of lizard”

- R

- = “Rabelais was the author of both Gargantua and Pantagruel”

- S

- = “Sasha is a cat”

- T

- = “Timothy is a chinchilla”

The second syntactic change replaces the connectives with symbols:

- ¬ means “not”

- ∧ means “and”

- ∨ means “or” (and specifically, inclusive or)

- → means “if …then” or “implies”

- ↔ means “if and only if”7

The former change is mostly a matter of concision; if the elementary statements are long, it is simply more convenient to replace them with single letters. The rationale of the latter, however, arises because of the complexities of natural language. Earlier, we had negated phrases like “Percy is a cat” with “It is not the case that Percy is a cat.” The reason for such stilted phrasing becomes clearer when these statements are restated in more normative English:

- “Percy isn’t a cat”

- “Quail are not a kind of lizard”

- “Rabelais wasn’t the author of both Gargantua and Pantagruel”

- “Sasha isn’t a cat”

- “Timothy is not a chinchilla”

As natural as such transformations seem to a native speaker, it is actually rather difficult to define clear linguistic rules about how to convert a sentence into its negation. The negation of “No one entered the room” isn’t “No one didn’t enter the room,” but “Someone entered the room.”

Armed with this syntactic system, we can begin to extend and connect propositions into formulas. In propositional logic, a well-formed formula (abbreviated wff, and pronounced “woof”) obeys the following rules:

- Any propositional variable by itself is a wff.

- Any wff preceded by the symbol ¬ is a wff.

- Any two wffs separated by the symbol ∧ is a wff.

- Any two wffs separated by the symbol ∨ is a wff.

- Any two wffs separated by the symbol → is a wff.

- Any two wffs separated by the symbol ↔ is a wff.

- Anything that can’t be constructed according to these rules is not a wff.

Well-formedness is something like the “grammar” of propositional logic. A grammatical sentence might be false (“Trout live in trees”); an ungrammatical sentence (“In live trees trout”) is just gibberish.

All the following are wffs:

- P (by rule 1)

This just represents the original proposition “Percy is a cat.”

- Q (by rule 1)

Similarly, this just represents“Quail are a kind of lizard”

- ¬Q (by rule 2 from the previous)

This represents “It is not the case that quail are a kind of lizard,” or equivalently “Quail are not a kind of lizard”

- P →¬Q (by rule 5, from the first and third examples)

This represents “If Percy is a cat, then quail are not a kind of lizard.”

- ¬Q∨(P →¬Q) (by rule 4, from the third and fifth examples)

This represents “Either quail are not a kind of lizard or else if Percy is a cat, then quail are not a kind of lizard.”

The use of parentheses, which might be added to the list of well-formedness rules, allows us to group expressions into logical units in an unambiguous way. The formula A∧B∨C (A “and” B “or” C), for example, is ambiguous; it could mean either of two things:

- A∧(B∨C) : you get A, plus your choice of B or C. (Steak, with either baked potato or fries).

- (A∧B)∨C : you have your choice of either a combination of A and B, or you can have C. (You can have the burger and fries, or you can have the salad bar).

Having reduced propositions and their connectives to symbols, there’s nothing to prevent us from doing the same thing to the formulas in which they’re embedded. By convention, formulas are represented using lower-case Greek letters. Just as the Roman letters P or Q might stand for some longer elementary proposition, so ϕ or ψ might stand for a more complicated formula (e.g. ¬¬[(P ∨Q) →¬R]). This notation allows us to rewrite the syntactic rules above more tersely as:

- Any propositional variable by itself is a wff.

- If ϕ is a wff, so is ¬ϕ.

- If ϕ and ψ are wffs, so is ϕ ∧ψ.

- If ϕ and ψ are wffs, so is ϕ ∨ψ.

- If ϕ and ψ are wffs, so is ϕ → ψ.

- If ϕ and ψ are wffs, so is ϕ ↔ ψ.

- If ϕ is a wff, so is (ϕ).

It should be noted that there is no limit to the number of different well-formed formulas that can be created, since you can always add another symbol to the front or combine it with another formula.

Truth Tables

The goal, of course, is still to assess the truth or falsehood of propositions. Propositional logic allows us to do this by breaking complex, well-formed formulas into their constituent parts and assessing the consequences of each elementary statement for the truth or falsehood of the overall formula. One of the simplest methods for doing this involves the construction of a truth table. This is simply a representation, in tabular form, of all the possible truth values of the underlying propositions, with the implications for the formulas of interest. Since each proposition can only be true or false, the possibilities are often not difficult to list. (In fact, we did exactly that, although not in tabular format, in our discussion of the semantics of “if-then.”)

To begin with a very simple truth table, consider the truth value of the expression ¬ϕ as laid out in table 1.1. Right away, we can see that ϕ can only have two possible values, and that each of those two values will result in a particular value when negated. We can therefore “read off” the answer by looking up the initial conditions. The rest of the connectives can be similarly represented, as in table 1.2.

| Table 1.1: | Semantics of ¬ (not) |

| if ϕ is | …then ¬ϕ is |

| true | false |

| false | true |

| Table 1.2: | Semantics of ∧, ∨, →, and ↔ |

| ϕ | ψ | ϕ ∧ψ | ϕ ∨ψ | ϕ → ψ | ϕ ↔ ψ |

| true | true | true | true | true | true |

| false | true | false | true | true | false |

| true | false | false | true | false | false |

| false | false | false | false | true | true |

The real power of this representation becomes clear when we use it to map out the possible values of a syllogism. Here is an argument we presented earlier as a weakness of syllogistic logic. Slightly modified:

| Either Timothy or Sasha is a cat. | (1.34) | |

| Timothy is not a cat. | (1.35) | |

| ∴ Sasha is a cat. | (1.36) |

As a way of formalizing this argument, we note that statement 1.34 is equivalent to the statement “Timothy is a cat or Sasha is cat.” We can then represent the statement “Timothy is a cat” by the letter T, and “Sasha is a cat” by the letter S. The formal version of the argument becomes:

| T ∨S | (1.37) | |

| ¬T | (1.38) | |

| ∴ S | (1.39) |

There are now two underlying propositions (T and S), two premises defined in

terms of these prepositions, and one conclusion (which is actually one of the

underlying premises). We can now construct a truth table with these five columns:

| S | T | T ∨ S | ¬T | S |

| true | true | true | false | true |

| false | true | true | false | false |

| TRUE | FALSE | TRUE | TRUE | TRUE |

| false | false | false | true | false |

Recall our earlier discussion of validity. An argument is valid if and only if it is not possible (in any possible world) for the premises to be true and the conclusion false at the same time. This truth table lists all the possible states of the world—S is either true or false, and so is T (independently). We can thus confirm by inspecting the table above that both premises are true in only one of the four possible worlds (the one marked out in capital letters). In this same line, the conclusion is also shown be true. Therefore, in any possible world where the premises are true, so is the conclusion. The argument is demonstrated, using a rote method, to be valid.

While truth tables can be used to test any argument in propositional logic, they can become too cumbersome in some cases. A truth table that involves only a single variable can be represented with only two lines (as in table 1.1 or table 1.3). An only slightly more complex table involving two variables (as above) needs four lines to cover the four possible cases. However, a wff with only three prepositional variables would need, not four or six, but eight lines (four for the case where the third variable was true, four for the case where it was false), and in general, adding another variable will double the number of rows in the table. An argument that began with “One of the seven dwarfs (Grumpy or Sneezy or Sleepy or Doc, etc.) doesn’t have a beard” would take more than a hundred lines. An argument that began “one of the 191 UN member states…” would take more lines than there are protons in the universe.

Propositional Calculus

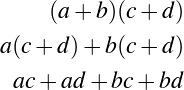

One way to make the system more tractable involves employing a system of inference rules—a method we have already encountered in our discussion of syllogistic logic. The idea, again, is to create a set of rules that can be generically applied to arguments with a particular structure. The propositional calculus describes a method of doing this with the particular goal of declaring a broad number of propositions to be formally equivalent. This not only reduces the overall complexity of the system, but makes the reduction itself a matter of rote substitution.

Two statements are said to be equivalent if they have the same truth value in all circumstances. For example, the formula ϕ is equivalent to the formula ¬¬ϕ, and indeed to the formulas ϕ ∧ϕ and ϕ ∨ϕ, as can be seen from the truth table in Table 1.3.

| Table 1.3: | Equivalence relationships |

| ϕ | ¬ϕ | ¬¬ϕ | ϕ ∧ϕ | ϕ ∨ϕ |

| true | false | true | true | true |

| false | true | false | false | false |

Having done this, we have also shown that the concepts of ϕ and of ¬¬ϕ have exactly the same (logical) meaning. In practical terms, this means that any time one encounters the (sub)formula ¬¬ϕ, one can replace it with the simpler ϕ (and vice versa, of course).

So a statement like

| (¬¬(P ∨Q)) → R | (1.40) | |

| (1.41) |

can be simplified by replacing ¬¬(P ∨Q) with its equivalent P ∨Q, giving us

| (P ∨Q) → R | (1.42) | |

| (1.43) |

The usual symbol for this replacement is ⊢. This observation can thus be formalized as the following two inference rules:

| ϕ ⊢¬¬ϕ | (1.44) | |

| ¬¬ϕ ⊢ ϕ | (1.45) |

We can extend this notion slightly to include the idea that if B is true whenever A is true, A ⊢ B, even if A and B aren’t actually equivalent. For example, we know that if P is true, P ∨ Q must be true no matter what Q entails, and therefore P ⊢ P ∨ Q.

We can also use inference rules like these to define connectives in terms of other connectives, giving us a simpler basis for the analysis of the logic scheme itself. The ↔ connective, for example, can be shown to be equivalent to a pair of directed implications (→) as in Table 1.4. Finally, and perhaps most importantly, we can show that specific (structural) inferences are valid.

| Table 1.4: | Redefinition of ↔ |

| ϕ | ψ | ϕ ↔ ψ | ϕ → ψ | ψ → ϕ | (ϕ → ψ)∧(ψ → ϕ) |

| true | true | true | true | true | true |

| false | true | false | false | true | false |

| true | false | false | true | false | false |

| false | false | true | true | true | true |

A particular example of this last kind of inference rule is modus ponens. In plain English, this is the structure of such arguments as:

| If this is Monday, I have a night class to teach | (1.46) | |

| This is Monday | (1.47) | |

| ∴ I have a night class to teach | (1.48) |

Now that we know the notation, we can simply say:

| P → Q | (1.49) | |

| P | (1.50) | |

| ∴ Q | (1.51) |

or, more tersely, [(P → Q)∧P] ⊢ Q. (If you have the expression “P implies Q” and you have P, you can replace the whole thing with Q.)

Here are some other examples of commonly used inference rules:

- (modus tollens) (P → Q)∧¬Q ⊢ (¬P)

- (hypothetical syllogism) (P → Q)∧(Q → R) ⊢ P → R

- (disjunctive syllogism) (P ∨Q)∧¬Q ⊢ P

- (and-simplification) (P ∧Q) ⊢ P

- (De Morgan’s theorem) ¬(P ∨Q) ⊢ (¬P ∧¬Q) and ¬(P ∧Q) ⊢ (¬P ∨¬Q)

- (commutivity) P ∨Q ⊢ Q∨P and P ∧Q ⊢ Q∧P

- (contrapositive) P → Q ⊢¬Q →¬P

We could, of course, provide detailed justifications for why these are valid (perhaps using truth tables). In the interest of brevity, we’ll leave that as an exercise for the reader. But we can’t pass so quickly through this list without noting that the development of these inference rules stands among the more notable achievements of mathematical logic in the last two hundred years or so. They are also very commonly used in mathematical arguments. We won’t belabor the point, but it might be useful to meditate on these insights in greater detail once we’ve finished our overview.

It is possible to define inference rules in terms of other inference rules, and indeed, to prove the validity of any particular inference rule using one or more of the others. Mathematicians have even managed to demonstrate that only one inference rule is actually necessary (modus ponens), and that all others can be derived from it. As elegant as this insight may be, such a restricted rule set can make arguments and proofs extremely confusing. Even so, mathematicians sometimes differ over the number of inference rules they will accept in a specific formulation of propositional logic.

Propositional logic has proven itself to be a powerful tool for thinking about separate propositions. Unfortunately, it suffers from the same sort of limitations that syllogistic logic does—there are obviously valid arguments that cannot be proven in propositional logic (including, amazingly, many of the syllogisms we studied earlier). In particular, propositional logic lacks the machinery necessary to peer inside a simple statement, such as a generalization, and see how the generalization applies in specific instances. Where syllogistic logic could not deal with “or,” propositional logic cannot deal with “all” or “some.”

1.2.3 Predicate Logic

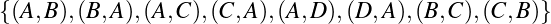

Predicate logic is a hugely influential system designed to remedy this. It introduces the notion of an expression (called a predicate) that expresses an incomplete concept (e.g. “…is a cat” or “…is blue”). It then puts forth a semantics for reasoning about concepts that may apply to some part of the world of discourse but not others. (The term “predicate” is borrowed from grammar, where it refers to the part of the sentence that isn’t the subject.)

The logics we’ve dealt with so far make it easy to deal with complete statements like “Bart Simpson is left handed” (which happens to be true). Predicate logic introduces incomplete statements in order to allow us to work with statements like “x is left-handed” where x is an unspecified variable. The advantages of this become clearer when we try to discuss a particular set of logical statements in which the properties of a set of particular values is in question.

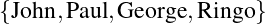

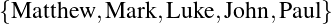

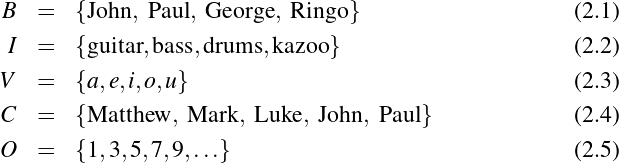

Suppose, for example, that we want to discuss—in an extremely formal and stilted way—the handedness of the members of The Beatles. We could begin by assigning each member of the band to a constant (that is, to a symbol, the value of which we do not intend to change). Thus a,b,c and d become John, Paul, George and Ringo, respectively. We’ll also replace the partial statement “…is left-handed” with a predicate variable: L(x). In this latter formation, we refer to x as the argument or parameter of L.

Armed with this system, we can now assert that L(b) and L(d), which is simply a more concise way of saying that “b (Paul) is left-handed,” and “d (Ringo) is left-handed.” Similarly, we can assert ¬L(a) and ¬L(c) (i.e. that neither John nor George is left-handed). And, of course, the connectives have their usual semantics: [L(d)∨L(a)]∧L(b) means that “Ringo or John is left-handed, and Paul certainly is.”

Predicate variables need not take only a single argument. Some predicates are inherently relational or comparative, as when we assert that “x is older than y.” We could formalize this as the two-place predicate variable O(x,y), and could use this to express ideas like:

| O(a,b) | (John is older than Paul) | (1.52) |

which is true, or

| O(c,d) | (George is older than Ringo) | (1.53) |

which is false.

We can now introduce two new symbols—called quantifiers—that only have meaning in association with incomplete concepts (that is, with predicate variables). ∀ means “all” or “every,” as in syllogistic logic, and ∃ means “some.” More explicitly, the expression ∀x : ϕ is interpreted as “for all/every/each possible value of x, ϕ is true.” ∃x : ϕ means that “for at least one value of x, ϕ is true” So of the two statements following

| ∃x : L(x) | (1.54) | |

| ∀x : L(x) | (1.55) |

statement 1.54 (“Someone [some Beatle] is left-handed”) is true, because for at least one value of x—either b or d—the expression is true, but statement 1.55 (“Everyone [every Beatle] is left-handed”) is false, because not all the Beatles are left-handed; only some of them are. Similarly, the idea that “no Beatle is older than himself,” which is certainly true, can be written as:

| ∀x : ¬O(x,x) | (1.56) |

We can also express the idea that Ringo is the oldest Beatle (“there does not exist a Beatle who is older than Ringo”):

| ¬∃x : O(x,d) | (1.57) |

We can even express the earth-shattering idea that there is an oldest Beatle:

| ∃y : [¬∃x : O(x,y)] | (1.58) |

(“There is an unnamed individual y such that there does not exist any individual Beatle x who is older than him.”)

Adding a quantifier does not resolve the variable inside the predicate variable (the x in L(x) is still unknown), but it does have an effect on the way we understand that variable. We can therefore distinguish, technically speaking, between two kinds of variables. A free variable is a variable that represents an incomplete and underdefined thought, like the x in “x is left-handed.” But one can complete the thought by binding the variable with something like “for all” or “for some.” Thus we would say that the x in “for some x, x is left-handed” is not a free variable, but a bound variable.

We can pull all of this into a set of rules for what constitutes a well-formed formula in predicate logic. It is, in essence, the same set of rules we established for propositional logic, but slightly enhanced:

- Any constant, variable, propositional variable, or predicate variable is a wff. (And any variable in this context is a free variable).

- If ϕ is a wff, so are (ϕ) and ¬ϕ.

- If ϕ and ψ are wffs, so are ϕ ∧ψ, ϕ ∨ψ(ϕ), ϕ → ψ, and ϕ ↔ ψ.

- Nothing else is a wff (except as noted below).

To these, we can add the following two rules:

- if ϕ is a wff, and α is a free variable in ϕ, then ∀α : ϕ is a wff (and α is no longer free, but bound).

- if ϕ is a wff and α is a free variable in ϕ, then ∃α : ϕ is a wff (and α is no longer free, but bound).

Messy, but it serves to capture our intuition of what “all” and “some” really mean.

Nonetheless there is a lot of potential confusion over the exact interpretation of quantifiers, sometimes in areas where natural language is itself ambiguous. For example, these two statements have different truth values in our Beatles example:

| ¬∃x : L(x) | (1.59) | |

| ∃x : ¬L(x) | (1.60) |

Formula 1.59 asserts that “it is not the case that a left-handed Beatle exists,” or equivalently, that no Beatle is left-handed. This is obviously false since Paul does exist (coded messages on album covers notwithstanding). On the other hand, formula 1.60 asserts that “there exists a Beatle who is not left-handed,” which is true. As another example, formulas 1.61 and 1.62 are equivalent (and both false), since they both assert the same thing (that no Beatle is left-handed):

| ¬∃x : L(x) | (1.61) | |

| ∀x : ¬L(x) | (1.62) |

(Equation 1.61 states that no left-handed Beatle exists, the second states that all Beatles are not left-handed.)

A more significant issue is that of ambiguity in how quantifiers interact. Using the predicate variable A(x,y) to signify that “x admires y,” how would the sentence “everyone admires someone” be written? Perhaps surprisingly, this sentence (in English) has two entirely separate and distinct meanings. The first meaning is that that there is some lucky individual who is universally admired—a global hero or heroine (a statement that is probably not true.) The second is that every person has their own individual person whom they admire, even if that hero is different for every individual. In formal logic, these two interpretations would be written respectively as

| ∃x : ∀y : A(y,x) | (1.63) | |

| ∀y : ∃x : A(y,x) | (1.64) |

or, in natural language, “there is some individual who is admired by everyone” versus “everyone has an individual whom they admire.” It is actually not possible to preserve the ambiguity within the framework of standard predicate logic—a feature that is seen by most mathematicians as a strength, since it reduces confusion and misunderstanding.

Predicate logic has the additional advantage of subsuming both propositional and syllogistic logic. It is, in fact, very easy to represent traditional Aristotelian syllogisms in this framework. The four types of Aristotelian statements can be written as follows (we use the formalization X(x) and Y (x) to represent groups X and Y, respectively):

| Type | Example | ... becomes |

| A: | All X are Y | ∀x : X(x) → Y (x) |

| E: | No X are Y | ∀x : X(x) →¬Y (x) |

| I: | Some X are Y | ∃x : X(x)∧Y (x) |

| O: | Some X are not-Y | ∃x : X(x)∧¬Y (x) |

We can thus use the truth-generating machinery of predicate logic (which incorporates the machinery of propositional logic) to analyze syllogistic statements.

We could also easily set forth the rules of predicate calculus. All the rules we’ve already seen for propositional calculus (modus ponens, modus tollens, etc.), plus any valid argument in propositional calculus, would remain valid in the extensions. The difference is exactly in the extensions; as the syntax and semantics are extended to incorporate quantifiers, new rules of inference are added to deal with them.

1.2.4 Possible Other Logics

Predicate logic, of course, has its own limitations (the reader is undoubtedly detecting a pattern here). The underlying constants—the object of discussion—can be generalized or quantified, but not the predicates themselves. Predicate logic is entirely unable to represent, for example, the statement, “Anything you can do, I can do better” or “I’m not the best in the world at anything” (“For any property P , there is a person x who is better at P than I am”). A logical system that allows quantification of variables but not predicates is referred to as a first-order logic. Second-order logic (or more generally higher-order logic) extends the system to allow quantification of predicates about variables. Third-order logic permits quantification of predicates about predicates about variables, and so forth. So the statement “For every property P , it’s true for a,” (or more loosely, “a can do anything.”):

| ∀P : P(a) | (1.65) |

is syntactically and semantically legal only in second-order logic or higher.

Second order logic is useful when you want to reason about not just things, but properties of things. For example, Gottfried Wilhelm Leibniz (1646–1716) put forth (as one of his famous metaphysical principles), the so-called “identity of indiscernibles,” which states that two objects are identical if they have all of their properties in common. We can express this idea in second-order logic (but not first-order logic) as:

| ∀x : ∀y : [x = y ↔∀P : (P(x) ↔¬P(y))] | (1.66) |

Similarly, we can express the related idea that if x and y are different objects, then x has a property that y doesnt:

| ∀x : ∀y : [x≠y ↔∃P : (P(x)∧P(y))] | (1.67) |

The difference is subtle, but powerful : in first-order logic, one can quantify over objects (x, y) but not over predicates (P ).

Modal logic is an extension of logic to cover the distinctions between certainty and contingency. Experimental results are usually contingent truths (the world might easily have come out some other way), while rules of inference are necessary truths. In modal logic, the symbol ♢ϕ is used to represent the idea that ϕ is possible, while □ϕ represents the idea that ϕ is necessary. They are typically related by the following equivalence and rule of inference:

| ♢ϕ ↔¬□¬ϕ | (1.68) |

or, informally, ϕ is possibly true if and only if it’s not necessarily untrue. That is, “It’s possible that Jones is the murderer, if and only if it’s not necessary that he’s innocent,” or, “If it is not possible that Jones is the murderer, then he is necessarily innocent,” or

| ¬♢ϕ ↔□¬ϕ | (1.69) |

We can, of course, prove that these two formulations are equivalent using the machinery developed for propositional logic.

The idea of modal logic has been extended to cover formalisms involving time (temporal logic), of obligation or morality (deontic logic), and of epistemology (epistemic logic). These are fascinating systems which we will, in the spirit of humility, leave to specialists. That such systems exist as active fields of study and areas for critical thought, though, indicates the overall vitality of the subject. It is likely that many more systems of logic will be developed as people find and formalize additional areas of inquiry. In all cases, however, the major questions are the same: “What can be expressed?” “What can be proven?” “How can things be proven?” and (perhaps most importantly) “Why should we trust the proofs?” We’ve spent most of this chapter considering the first three questions. The fourth question is important enough to merit a section of its own.

1.3 The Significance of Logic

1.3.1 Paradoxes and the Logical Program

Grelling-Nelson paradox

Earlier, in our discussion of paradoxes, we noted that a paradox usually indicates that something is deeply wrong—not a misapplied method or a mistake, but a flaw in the assumptions and definitions upon which the entire formulation rests. In the late-nineteenth and early-twentieth centuries, it became obvious to practicing mathematicians that many of the intuitive concepts underlying mathematical practice were actually paradoxical. One example of this is the Grelling-Nelson paradox, which addresses the relationship between the act of naming and the world.

Kurt Grelling (1886–1942) and Leonard Nelson (1882–1927) observed that, at least in some instances, the very act of defining things can create a paradox—which is problematic, given that definitions represent one of the major ways human beings divide up the world. They defined a new pair of words, “autological” and “heterological,” as descriptions of adjectives. An “autological” adjective is one that describes itself. For example, the word “terse” is itself terse, the word “pentasyllabic” is itself pentasyllabic, “unhyphenated” is unhyphenated, and “English” is itself English. An adjective is “heterological” if and only if it is not autological; for example “Japanese,” “monosyllabic,” and “flammable” are not autological and therefore are heterological. Because this definition is an explicit division, every adjective is either autological or heterological.

But what of the word “heterological” itself? If it is itself autological, then it must describe itself and therefore be heterological. Conversely, if it is heterological, then it does describe itself and is therefore autological. Either way, it must (impossibly) both be and not be heterological, yielding the paradox.

This is obviously a close relative of the Barber paradox discussed earlier. As with the Barber paradox, the solution is to reject an assumption. But in this case, the assumption that would need to be rejected is the assumption that one can simply make up definitions and divide the world. The very act of defining would seem to introduce paradoxes into our reasoning.

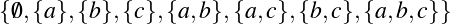

Russell’s Paradox

Similar paradoxes were being discovered relating to many aspects of (then-current) mathematics. In particular, the theory of “sets” at that time relied on an informal understanding that a set was just a collection of objects, and that for any property one might care to define, one implicitly defined the set of all objects with that property. Since we know about the idea of “red,” we also know about the set of things that are red. Since we know about even numbers, we know about the set of numbers that are even. This notion was formalized by many turn-of-the-twentieth-century mathematicians, most notably Gottlob Frege (1848–1925).

Unfortunately, this naive notion of a set generates almost exactly the same paradox. Known as Russell’s paradox (after the discoverer, Bertrand Russell, 1872–1970), it involves defining, not a new word, but a new property: that of “not being a member of itself.” For example, the set of all things that are not an elephant would include the authors, the reader, the Eiffel tower…and of course that set itself. Similarly the set of all sets (the set of all things that are sets) is a set, and the set of all mathematical concepts is a mathematical concept. On the other hand, the set of all weekdays isn’t itself a weekday.

But what happens when we ask about the set of all sets that are not members of itself? Is this set a member of itself?

The result is a paradox. If the set is a member of itself, then it is not a member of itself. If it is not a member of itself, then it must be a member of itself. As with the earlier paradoxes, it indicated to the mathematicians at the time that there was something fundamentally wrong with our naive formulation of set theory.

But if set theory does not follow our intuitions, what else is likely to break? What about the mathematical results that depend upon the ideas and concepts of set theory? How far does the rot spread? If paradox is a sign that we don’t understand what we’re doing, then a paradox at the heart of mathematics is a problem. For mathematics to progress as a discipline, it needed to be paradox-free. The solution, it seemed, was to define as much of mathematics as possible in terms of a few simple and fundamental principles that could be proven valid and free from paradox. Proofs could then be put on a sound theoretical basis by justifying them in terms of this minimal and rigorous set of assumptions whose truth was beyond realistic question. Mathematics would thus be “axiomatized” and formally validated using specific, valid, logic schemes.

1.3.2 Soundness, Completeness, and Expressivity

Of course, this project would only work if the logic employed was up to the task. In particular, it needed to be sound, complete, and expressive.

We’ve discussed expressivity at some length; there are some statements that simply can’t be made, let alone analyzed, in some logics. A logic system capable of supporting all of mathematics would have to be able to express all of mathematics. At a minimum, it would have to be able to express arithmetic. That’s the main reason why syllogisms aren’t used in formal mathematics much any more—it’s too hard even to express simple arithmetic concepts (like the “story problems” one encounters in primary school).

A logical system is sound if and only if every argument provable in the system is also valid. In other words, if it can be proved within the system that something is true, then that something is actually true. A system is similarly complete if and only if everything true is provable in the system. Thus a sound system does not allow one to prove things that aren’t true, and a complete system will not allow unprovable truths to hide in the corners of the system.

Syllogistic logic provides a good example of this. It can be proven (though we won’t, for the sake of brevity) that the “standard” syllogistic logic is both sound and complete. But let’s suppose that some mad mathematician wanted to incorporate the following argument as a legitimate structure (we’ll call it the *Baloney syllogism):8

| Major premise–I | No X are Y | (1.70) |

| Minor premise–I | No Y are Z | (1.71) |

| Conclusion–I | ∴ No X are Z | (1.72) |